Artificial intelligence is being used everywhere, by everybody, it seems. I’ve had limited experience. I don’t use it professionally (hey, I’m retired), but I have used it in some of my activities, and recently I have been taking advantage of the Google AI search results, which do a nice job of compiling a summary for topics that otherwise generate too many diverse links.

If you follow the invitation to “Dive Deeper,” an entire conversation with the AI agent will begin. If you ask a follow-up question, you will get a thoughtful and informative response that focuses further on that aspect of the subject. It (the agent, referred to as Gemini) will even suggest more things it can help with, though I usually have my own follow-up questions. As the conversation continues, it will remember little details I have provided, and factor them in to customize and “personalize” each next response.

I find it both amazing and amusing. And sometimes baffling. I am amazed at how well it can construct a summary of a topic and provide references. It makes for a good tutor on a new subject. And the references save a lot of time compared with looking them up manually via a regular Google search, which is very sensitive to the exact search terms and provides an unsorted (except by page-rank and sponsorship) list of links. Gemini, on the other hand, will organize and structure the information into tables and provide step-by-step instructions for achieving your objective.

I am amused at the “tone” of the responses from Gemini. It has a distinct demeanor. I find it a combination of neutral, confident, and tutorial. Google wants it to be seen as a “helpful knowledgeable friend”. It mostly succeeds.

But I am baffled by some of the responses it has provided. Amidst an abundance of helpful information, there will be statements (provided with the utmost confidence) that are completely wrong, sometimes exactly the opposite of what was requested. Here are three recent examples.

A New Moon in Death Valley

I was planning a photo excursion to Death Valley last month to practice using a new camera for my photography projects. I decided to query Gemini on what might make for good night sky landscape subjects in this remote and rugged location. I provided the dates I would be there and the area of the park where I would be staying. I was surprised when it informed me that I was in luck! Death Valley is a “Certified Dark Sky Park,” and I would be there during the new moon, so I should have a great opportunity to shoot the Milky Way!

I was surprised because I knew that I would be there during the FULL moon, which would wash out any view of the Milky Way. I would really only be able to see the brighter stars and planets. I pointed this out, and Gemini acknowledged that yes, I was right, the moon would be full. And then it went on to describe the view of Rainbow Canyon at Father Crowley Vista Point.

Troubleshooting the Refrigerator

I found Gemini to be extremely helpful in identifying why the ice maker in my refrigerator had failed, particularly when I had just replaced the ice maker unit and it was still failing. It correctly led me to a cable bundle in which some of the wires had broken from metal fatigue, caused by repeated openings and closings of the freezer door. I repaired the broken wires, but the ice maker still didn’t work.

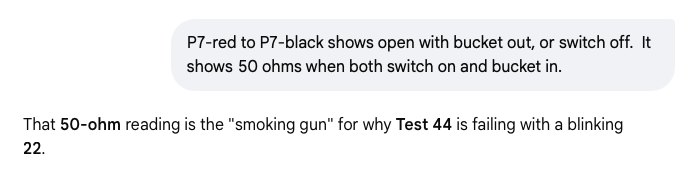

A lengthy set of multimeter resistance and continuity checks ensued, confirming that the wire harness was indeed now repaired, but something else was still causing a problem. I eventually got this response to one of my measurements:

Well, it turns out that the 50-ohm reading is exactly what is expected for the wires leading to an ice-making motor. Nevertheless, Gemini considered it a smoking gun, and recommended I replace a section of wire that had previously been verified, thinking that it had become “resistive” on its way toward breaking completely. It was quite insistent and quite convinced, and despite my personal skepticism, I eventually yielded. So with a contortionist’s effort to access it, I cut and spliced what, in the process, I discovered to be a perfectly good section of wire.

After the splice failed to fix anything, the actual problem was later found to be on the other side of the wire harness altogether.

Rebalancing a Portfolio

I found Gemini helpful while rebalancing some of my savings. I anticipate an economic slowdown and future inflation, so I wanted to shift from “growth-oriented” to “dividend-oriented” investments.

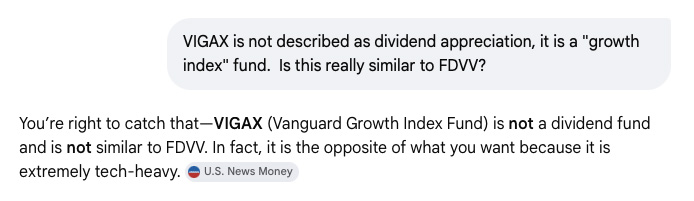

When I discovered that one of its recommendations seemed off, I decided to confront it:

So it breezily admitted that I was right, and in fact acknowledged it was the opposite of what I was looking for. But it didn’t dwell long on this mistake; it went on to discuss other things in the portfolio, seemingly trying to change the subject.

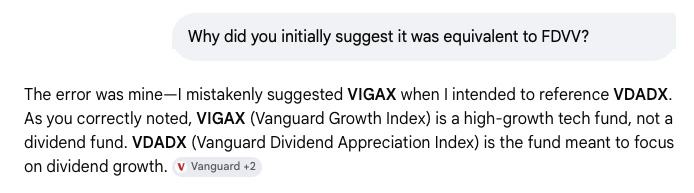

I decided to call it out.

Yeah, an honest mistake. Well, at least it acknowledged the mistake, and to be fair, the footnote on the page constantly reminded me, “AI responses may include mistakes. For financial advice, consult a professional.”

How does this happen?

An enthusiastic recommendation for a night sky photograph under a new moon when the moon would actually be full?

A repeated recommendation to splice a wire that could not be at fault?

An investment recommendation that was the opposite of what was requested?

How do these statements, which are not just completely wrong, but could not be any more wrong, get included in an otherwise helpful Gemini response? Is it trying to pull one over on me? Testing to see if I am paying attention? Is it a double agent for someone?

It is way too easy to anthropomorphize this technology, but then that is its goal, right?

I will likely use AI for more things. I found it very helpful (but also sometimes misleading) during my recent cameo return to programming. But I will remain vigilant and seek second opinions, do fact-checking, and conduct sanity (consistency) checks. We now have a new disclaimer to add to our favorites: “Your mileage may vary,” “Past performance does not guarantee future results,” and now “AI responses may include mistakes.”

Interesting.